Evaluation of an information retrieval system (a search engine, for example) generally focuses on two things:

1. How relevant are the retrieved results? (precision)

2. Did the system retrieve many of the truly relevant documents? (recall)

For those that aren’t familiar, I’ll explain what precision and recall are, and for those that are familiar, I’ll explain some of the confusion in the literature when comparing precision-recall curves.

Geese and airplanes

Suppose you have an image collection consisting of airplanes and geese.

You want your system to retrieve all the airplane images and none of the geese images.

Given a set of images that your system retrieves from this collection, we can define four accuracy counts:

True positives: Airplane images that your system correctly retrieved

True negatives: Geese images that your system correctly did not retrieve

False positives: Geese images that your system incorrectly retrieved, believing them to be airplanes

False negatives: Airplane images that your system incorrectly did not retrieve, believing them to be geese

Using the terms I just defined, in this example retrieval, there are three true positives and one false positive. How many false negatives are there? How many true negatives are there?

There are two false negatives (the airplanes that the system failed to retrieve) and four true negatives (the geese that the system did not retrieve).
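The four counts can be checked with a short sketch. It assumes the ten images are encoded as 1 (airplane) and 0 (goose) in the ranked order used later in this article, and that the system retrieves the top four; the variable names are illustrative only.

```python
# Ground truth for the ten images, listed from most to least airplane-like
# according to the system: 1 = airplane, 0 = goose (assumed encoding).
labels = [1, 1, 0, 1, 0, 1, 0, 0, 0, 1]
num_retrieved = 4  # the system returns the top four images

retrieved = labels[:num_retrieved]
not_retrieved = labels[num_retrieved:]

tp = sum(1 for y in retrieved if y == 1)      # airplanes retrieved
fp = sum(1 for y in retrieved if y == 0)      # geese retrieved
fn = sum(1 for y in not_retrieved if y == 1)  # airplanes missed
tn = sum(1 for y in not_retrieved if y == 0)  # geese correctly left out

print(tp, fp, fn, tn)  # 3 1 2 4
```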

Precision and recall

Now, you’ll be able to understand more exactly what precision and recall are.

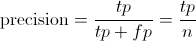

Precision is the percentage of true positives in the retrieved results. That is:

$$\text{precision} = \frac{tp}{n}$$

where n is equal to the total number of images retrieved (tp + fp).

Recall is the percentage of the airplanes that the system retrieves. That is:

$$\text{recall} = \frac{tp}{tp + fn}$$

In our example above, with 3 true positives, 1 false positive, 4 true negatives, and 2 false negatives, precision = 0.75, and recall = 0.6.

75% of the retrieved results were airplanes, and 60% of the airplanes were retrieved.
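As a rough sketch, the two definitions translate directly into code. The function name is hypothetical, and returning 0.0 when a denominator is zero is an assumed convention, not something the definitions prescribe.

```python
def precision_recall(tp, fp, fn):
    """Return (precision, recall) computed from raw counts."""
    retrieved = tp + fp  # n: everything the system returned
    relevant = tp + fn   # every airplane in the collection
    # Assumed convention: report 0.0 when a ratio would be undefined.
    precision = tp / retrieved if retrieved else 0.0
    recall = tp / relevant if relevant else 0.0
    return precision, recall


precision, recall = precision_recall(tp=3, fp=1, fn=2)
print(precision, recall)  # 0.75 0.6
```

True negatives do not appear in either formula, which is why the four correctly ignored geese have no effect on the result.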

Adjusting the threshold

What if we’re not happy with that performance? We could ask the system to return more examples. This would be done by relaxing our threshold of what we want our system to consider as an airplane. We could also ask our system to be more strict, and return fewer examples. In our example so far, the system retrieved four examples. That corresponds to a particular threshold (shown below by a blue line). The system retrieved the examples that appeared more airplane-like than that threshold.

We can move that threshold up and down to get a different set of retrieved documents. At each position of the threshold, we would get a different precision and recall value. Specifically, if we retrieved only the top example, precision would be 100% and recall would be 20%. If we retrieved the top two examples, precision would still be 100%, and recall will have gone up to 40%. The following chart gives precision and recall for the above hypothetical ordering at all the possible thresholds.

| Retrieval cutoff | Precision | Recall |
|---|---|---|
| Top 1 image | 100% | 20% |
| Top 2 images | 100% | 40% |
| Top 3 images | 66% | 40% |
| Top 4 images | 75% | 60% |
| Top 5 images | 60% | 60% |
| Top 6 images | 66% | 80% |
| Top 7 images | 57% | 80% |
| Top 8 images | 50% | 80% |
| Top 9 images | 44% | 80% |
| Top 10 images | 50% | 100% |
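The table above can be reproduced with a small loop over cutoffs. This is a sketch under the assumption that the ranking is encoded as 1 (airplane) and 0 (goose), from most to least airplane-like; `pr_at_cutoffs` is a hypothetical helper name.

```python
def pr_at_cutoffs(ranked_labels):
    """Return a list of (k, precision, recall) for every cutoff k."""
    total_relevant = sum(ranked_labels)
    rows = []
    tp = 0
    for k, y in enumerate(ranked_labels, start=1):
        tp += y  # one more true positive if the k-th image is an airplane
        rows.append((k, tp / k, tp / total_relevant))
    return rows


labels = [1, 1, 0, 1, 0, 1, 0, 0, 0, 1]
rows = pr_at_cutoffs(labels)
for k, p, r in rows:
    print(f"Top {k:2d}: precision={p:.3f} recall={r:.1f}")
```

The exact precision at cutoffs 3 and 6 is 2/3, which the table shows truncated to 66%.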

Precision-recall curves

A good way to characterize the performance of a classifier is to look at how precision and recall change as you change the threshold. A good classifier will be good at ranking actual airplane images near the top of the list, and be able to retrieve a lot of airplane images before retrieving any geese: its precision will stay high as recall increases. A poor classifier will have to take a large hit in precision to get higher recall. Usually, a publication will present a precision-recall curve to show how this tradeoff looks for their classifier. This is a plot of precision p as a function of recall r.

Average precision

Rather than comparing curves, it’s sometimes useful to have a single number that characterizes the performance of a classifier. A common metric is the average precision. This can actually mean one of several things.

Average precision

Strictly, the average precision is precision averaged across all values of recall between 0 and 1:

$$\int_0^1 p(r)\,dr$$

That’s equal to taking the area under the curve. In practice, the integral is closely approximated by a sum over the precisions at every possible threshold value, multiplied by the change in recall:

$$\sum_{k=1}^{N} P(k)\,\Delta r(k)$$

where N is the total number of images in the collection, P(k) is the precision at a cutoff of k images, and delta r(k) is the change in recall that happened between cutoff k-1 and cutoff k.

In our example, this is (1 * 0.2) + (1 * 0.2) + (0.66 * 0) + (0.75 * 0.2) + (0.6 * 0) + (0.66 * 0.2) + (0.57 * 0) + (0.5 * 0) + (0.44 * 0) + (0.5 * 0.2) = 0.782.

Notice that the points at which the recall doesn’t change don’t contribute to this sum (in the graph, these points are on the vertical sections of the plot, where it’s dropping straight down). This makes sense: since we’re computing the area under the curve, those sections of the curve aren’t adding any area.
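The sum can be written as a few lines of code. This is a minimal sketch, assuming the ranking is given as a list of 1s (airplane) and 0s (goose) and taking the recall before any image is retrieved to be 0; the function name is illustrative.

```python
def average_precision(ranked_labels):
    """Approximate the area under the precision-recall curve as
    the sum over k of P(k) * delta_r(k)."""
    total_relevant = sum(ranked_labels)
    tp = 0
    prev_recall = 0.0  # r(0): recall when nothing has been retrieved
    ap = 0.0
    for k, y in enumerate(ranked_labels, start=1):
        tp += y
        precision = tp / k
        recall = tp / total_relevant
        # Cutoffs where recall does not change contribute nothing.
        ap += precision * (recall - prev_recall)
        prev_recall = recall
    return ap


ap = average_precision([1, 1, 0, 1, 0, 1, 0, 0, 0, 1])
print(round(ap, 4))  # 0.7833
```

The result differs slightly from the 0.782 worked out by hand because the hand calculation uses 0.66 where the exact precision is 2/3.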

Interpolated average precision

Some authors choose an alternate approximation that is called the interpolated average precision. Often, they still call it average precision. Instead of using P(k), the precision at a retrieval cutoff of k images, the interpolated average precision uses:

$$\max_{\tilde{k} \ge k} P(\tilde{k})$$

In other words, instead of using the precision that was actually observed at cutoff k, the interpolated average precision uses the maximum precision observed at cutoff k or any later cutoff, that is, at equal or higher recall. The full equation for computing the interpolated average precision is:

$$\sum_{k=1}^{N} \max_{\tilde{k} \ge k} P(\tilde{k})\,\Delta r(k)$$

Visually, here’s how the interpolated average precision compares to the approximated average precision (to show a more interesting plot, this one isn’t from the earlier example):

Further, there are variations on where to take the samples when computing the interpolated average precision. Some take samples at a fixed 11 points from 0 to 1: {0, 0.1, 0.2, …, 0.9, 1.0}. This is called the 11-point interpolated average precision. Others sample at every k where the recall changes.
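Both sampling variants can be sketched as follows. This is an illustrative implementation, not the code of any particular benchmark; it assumes a ranking encoded as 1s and 0s, and all function names are hypothetical.

```python
def interpolated_curve(ranked_labels):
    """Return (recalls, interpolated precisions), one entry per cutoff k."""
    total_relevant = sum(ranked_labels)
    tp = 0
    precisions, recalls = [], []
    for k, y in enumerate(ranked_labels, start=1):
        tp += y
        precisions.append(tp / k)
        recalls.append(tp / total_relevant)
    # Running maximum from the right: best precision at cutoff k or later.
    interp = precisions[:]
    for i in range(len(interp) - 2, -1, -1):
        interp[i] = max(interp[i], interp[i + 1])
    return recalls, interp


def interpolated_ap(ranked_labels):
    """Sum the interpolated precision times the change in recall at every k."""
    recalls, interp = interpolated_curve(ranked_labels)
    ap, prev_recall = 0.0, 0.0
    for r, p in zip(recalls, interp):
        ap += p * (r - prev_recall)
        prev_recall = r
    return ap


def eleven_point_ap(ranked_labels):
    """Average the interpolated precision at recall 0, 0.1, ..., 1.0."""
    recalls, interp = interpolated_curve(ranked_labels)
    total = 0.0
    for i in range(11):
        level = i / 10
        candidates = [p for r, p in zip(recalls, interp) if r >= level]
        total += max(candidates) if candidates else 0.0
    return total / 11


labels = [1, 1, 0, 1, 0, 1, 0, 0, 0, 1]
print(interpolated_ap(labels), eleven_point_ap(labels))
```

For the goose and airplane ordering, the every-k variant happens to equal the non-interpolated sum, because the precision at each recall step is already at least as high as every later precision. The 11-point variant comes out higher, at roughly 0.80, which illustrates why the variants should not be compared against each other.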

Confusion

Some important publications use the interpolated average precision as their metric and still call it average precision. For example, the PASCAL Visual Object Classes (VOC) Challenge has used this as their evaluation metric since 2007. I don’t think their justification is strong. They say, “the intention in interpolating the precision/recall curve in this way is to reduce the impact of the “wiggles” in the precision/recall curve”. Regardless, everyone compares against each other on this metric, so within the competition, this is not an issue. However, the rest of us need to be careful when comparing “average precision” values against other published results. Are we using the VOC’s interpolated average precision, while previous work had used the non-interpolated average precision? This would incorrectly show improvement of a new method when compared to the previous work.

Summary

Precision and recall are useful metrics for evaluating the performance of a classifier.

Precision and recall vary with the strictness of your classifier’s threshold.

There are several ways to summarize the precision-recall curve with a single number called average precision; be sure you’re using the same metric as the previous work that you’re comparing with.

Thank you. Your explanation is very clear!

Now I understand what Average Precision is. But what about Mean Average Precision? How can I obtain that value?

Mean Average Precision is used when you have several retrieval tasks and want to find a score that summarizes your performance across all of them. For example, you might have a large collection of images, but you’re interested in retrieving the cars, chairs, people, and lamps, each as a separate query. You would compute the average precision for your car classifier, average precision for your chair classifier, average precision for your people classifier, and average precision for your lamp classifier. Take the mean of these average precisions to get the Mean Average Precision.
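A small sketch of that calculation follows. The four rankings are made up purely for illustration (1 marks a correct result, 0 an incorrect one), and the helper uses the non-interpolated sum described in the article.

```python
from statistics import mean


def average_precision(ranked_labels):
    """Non-interpolated average precision: sum of P(k) * delta_r(k)."""
    total_relevant = sum(ranked_labels)
    tp, prev_recall, ap = 0, 0.0, 0.0
    for k, y in enumerate(ranked_labels, start=1):
        tp += y
        recall = tp / total_relevant
        ap += (tp / k) * (recall - prev_recall)
        prev_recall = recall
    return ap


# Hypothetical ranked results for each query (not real data).
rankings = {
    "car": [1, 1, 0, 0],
    "chair": [0, 1, 0, 1],
    "people": [1, 0, 1, 0],
    "lamp": [0, 0, 1, 1],
}

per_query_ap = {name: average_precision(r) for name, r in rankings.items()}
mean_ap = mean(per_query_ap.values())
print(per_query_ap)
print(mean_ap)
```

Each query counts equally in the mean, regardless of how many relevant items it has.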

Thank you!! This is the best explanation of average precision I have seen after doing weeks of research. I was able to understand the formula very well so again Thank you!

Also, I think I know why in some publication they call it average precision. I was reading this paper “are we ready for autonomous driving?” and they reference the PASCAL object detection recall paper, they use this formula for AP:

AP = (1/11) Σ p_interp(r), summed over r ∈ {0, 0.1, …, 1.0} (sorry, I couldn’t attach the image of the formula). By putting the 1/11 at the beginning, I think they are able to obtain the average of the interpolated precision. However, I am not sure; it is just a theory.

Great! I’m happy the article was useful. Yes, the PASCAL challenges used interpolated precision. In early years, they took an 11-point sample to get the average. In later years, they evaluated the area exactly.

Very nice explanation on precision-recall graphs! Most helpful page I’ve seen on the web about this.

Have a question: what do you think is the best method to evaluate a retrieval system when you have multiple users issuing queries for only a single object from a given ranked list?

Users do issue multiple queries during their lifetime so I was considering bundling queries up into one “session” for this purpose. My issue is when doing this I may get users requesting for the same object within a session – not sure how to deal with that in a precision-recall calculation!

Thank you!

I don’t understand your question, though. If users are only given a single item in response to their query, it may be better to evaluate your system based on “precision at rank 1”. Simply put, that’s the percentage of time the highest ranked result is a correct result.

I don’t see how the multiple users problem complicates things. Unless the queries interfere with each other somehow, evaluate your system from the viewpoint of an individual user.
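Precision at rank 1 could be computed along these lines. The query results below are invented for illustration, with 1 marking a correct result and 0 an incorrect one; the function name is hypothetical.

```python
def precision_at_1(results_per_query):
    """Fraction of queries whose top-ranked result is correct."""
    if not results_per_query:
        raise ValueError("need at least one query")
    hits = sum(1 for ranked in results_per_query if ranked and ranked[0] == 1)
    return hits / len(results_per_query)


# Hypothetical ranked results for four queries (not real data).
queries = [
    [1, 0, 0],
    [0, 1, 0],
    [1, 1, 0],
    [0, 0, 1],
]
p_at_1 = precision_at_1(queries)
print(p_at_1)  # 0.5
```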

This explanation was quite helpful and very clear. Thank you for composing it :)

your explanation is excellent, it will make a classical tutorial

Could u plz explain how to set threshold value of an image dataset for finding its precision and recall?

What type of classifier are you using?

Thank you , very nicely explained.

Thanks you, nice posts

Crystal clear explanation … thanks a lot :)

Thank you!!! This is the best explanation I have seen on precision and recall. I have a question… If the classifier is ideal, the false positives and false negatives would be zero. If there are five airplanes and five geese, and the classifier detects 5 airplanes most of the time. Ideally, the precision and recall would be 1. There can be a few of the use cases when the classifier works perfectly. In the curve, I see the precision reducing with increase in recall.

There can be a case where the classifier fails and there are very few true positives. In that case, both precision and recall would be close to zero. Will the curve be increasing rather than decreasing?

You’re correct. You can see that happening between recall=0.3 and recall=0.7 in the last examples above (https://sanchom.files.wordpress.com/2011/08/interpolated-vs-approximated.png). In order to ignore those effects, “interpolated” average precision treats those regions as if they had constant precision equal to the highest precision observed at higher recalls.

You defined average precision through an integral. Do you by some chance have a reference for this definition? I am looking for a book or paper to cite. Thank you!

Here is a reference: http://arxiv.org/pdf/1310.5103.pdf. In case you or another reader is wondering why the integral makes sense, I would explain it like this. You can’t talk about average precision without stating *what* you’re averaging over. In this case, we’re taking the average of precision over all recall values. That becomes the integral.

Another reference: Cummins, Ronan. “Predicting query performance directly from score distributions.” Information Retrieval Technology. Springer Berlin Heidelberg, 2011. 315-326. http://dcs.gla.ac.uk/~ronanc/papers/cumminsAIRS11_2.pdf

thanks , I like this post.

Really great article! The graphs are helpful and make things clear for me!

This is indeed a great article! May I (with citation) use some of the figures in my thesis?

Really helpful. Thanks ! for clear explanation.

Thank you, it’s very helpful and clear. And I have another question. What should we set to be the threshold value in SSD? The nms_threshold or the IOU between the predicted bounding box and the ground truth?

Hi, I think different settings for nms_threshold should be treated as separate algorithm variants. Generate a full precision-recall curve for each nms_threshold setting, where the threshold that you vary for the precision-recall curve is the threshold used for the final decision.

The IOU (intersection-over-union) that is required for a prediction to count as a correct prediction should be fixed between all algorithms that you’re comparing. It’s often specified by the dataset creator or there may be a consensus among previous research that’s used the dataset. You need to keep it constant between algorithms in order to have comparable results.
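For readers unfamiliar with the IOU criterion, here is a minimal sketch. It assumes axis-aligned boxes given as (x_min, y_min, x_max, y_max) tuples; the 0.5 cutoff is only an example value, and the right value is whatever the dataset or prior work specifies.

```python
def iou(box_a, box_b):
    """Intersection-over-union of two axis-aligned boxes,
    each given as (x_min, y_min, x_max, y_max)."""
    inter_w = max(0.0, min(box_a[2], box_b[2]) - max(box_a[0], box_b[0]))
    inter_h = max(0.0, min(box_a[3], box_b[3]) - max(box_a[1], box_b[1]))
    intersection = inter_w * inter_h
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - intersection
    return intersection / union if union > 0 else 0.0


IOU_REQUIRED = 0.5  # example value only; keep it fixed across compared methods

predicted = (0, 0, 2, 2)
ground_truth = (1, 1, 3, 3)
overlap = iou(predicted, ground_truth)
is_correct = overlap >= IOU_REQUIRED
print(overlap, is_correct)
```

Here the boxes share one unit of area out of a union of seven, so the prediction would not count as correct at the example cutoff.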

Hi! Great article! Could you tell me how to solve it (goose-plane) with scikit learn?

y_true = np.array([1, 1, 0, 1, 0, 1, 0, 0, 0, 1])

y_scores = np.array(?????)

precision, recall, thresholds = precision_recall_curve(y_true, y_scores)

What should I use for y_scores?

Thank you!

Hi! You could use any array of decreasing values. The score is an indication of how certainly the classifier thinks the thing is an airplane. The first thing in the list is most airplane-like, the last thing in the list is the least airplane-like, according to the classifier.
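To make that concrete, here is a dependency-free sketch. The score values are made up; any strictly decreasing sequence encodes the same ranking, and the helper name is hypothetical. The same two lists, converted to arrays, are the kind of input the `precision_recall_curve` call in the question expects.

```python
y_true = [1, 1, 0, 1, 0, 1, 0, 0, 0, 1]
n = len(y_true)

# Made-up scores: strictly decreasing, so the first image is the most
# airplane-like and the last image is the least airplane-like.
y_scores = [1.0 - i / n for i in range(n)]


def retrieved_labels(threshold):
    """Labels of all images whose score is at or above the threshold."""
    return [y for y, s in zip(y_true, y_scores) if s >= threshold]


# Placing the threshold at the fourth score retrieves the top four images.
top4 = retrieved_labels(y_scores[3])
precision_top4 = sum(top4) / len(top4)
recall_top4 = sum(top4) / sum(y_true)
print(top4, precision_top4, recall_top4)
```

Only the order of the scores matters for the curve, not their absolute values.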

Hi,

Excellent article! :) I created a repository that evaluates object detectors using the most popular metrics, including mAP. I tested it exhaustively, and the results were carefully compared against the official implementations; they are exactly the same.

I will leave the link below in case anyone wants to use it: https://github.com/rafaelpadilla/Object-Detection-Metrics

Hi Sancho,

Thank you very much for creating this article to explicitly explain how threshold impacts Precision and Recall! I am starting a machine learning project that will apply Classification. The article really clarifies a lot of my doubts in measuring model performance. Very nice piece!

Here I have a question though:

In your Average Precision calculation, you said…

“where N is the total number of images in the collection, P(k) is the precision at a cutoff of k images, and delta r(k) is the change in recall that happened between cutoff k-1 and cutoff k.

In our example, this is (1 * 0.2) + (1 * 0.2) + (0.66 * 0) + (0.75 * 0.2) + (0.6 * 0) + (0.66 * 0.2) + (0.57 * 0) + (0.5 * 0) + (0.44 * 0) + (0.5 * 0.2) = 0.782.”

When looking into the precision-recall curve, plotting only starts from (1, 0.2). At cutoff P(1), the r(0) is undefined, so I guess the delta between r(1) and r(0) is also undefined? I am not quite sure about the first “(1 * 0.2)” you put there. Why do you add it to the calculation?

Your clarification would be appreciated!

Thank you!

r(0) is the recall when you return zero results. That recall is 0. Another way of understanding the first term is that it is a necessary part of the area under the curve (which is another way of viewing the integral, or its approximation in this sum).